Automated Ingestion of PII from 43 Data Vendors

The client is a public records provider for consumers and businesses seeking details on people in the US. Their business depends on regular data updates from 43 public and commercial data providers.

The client needed a system that works continuously:

- Checks sources regularly

- Downloads new data when it appears

- Unpacks and decodes loaded files

- Retries when the loading fails

- Triggers the next step of the data processing pipeline in an Airflow DAG

Key Challenges in the Data Ingestion Project

Source variability

There was no standard pattern across vendors. Some sources allowed direct downloads. Others required login, navigation, filters, and multi-step actions before a file became available.

Unstable inputs

Files could:

- Fail mid-download

- Appear later than expected

- Be replaced under the same name with a different size

- Arrive in large batches or as single large archives

Volume and frequency

Some vendors delivered one large file. Others delivered hundreds of files per update. Checks had to run frequently to avoid missing changes, even when updates were infrequent.

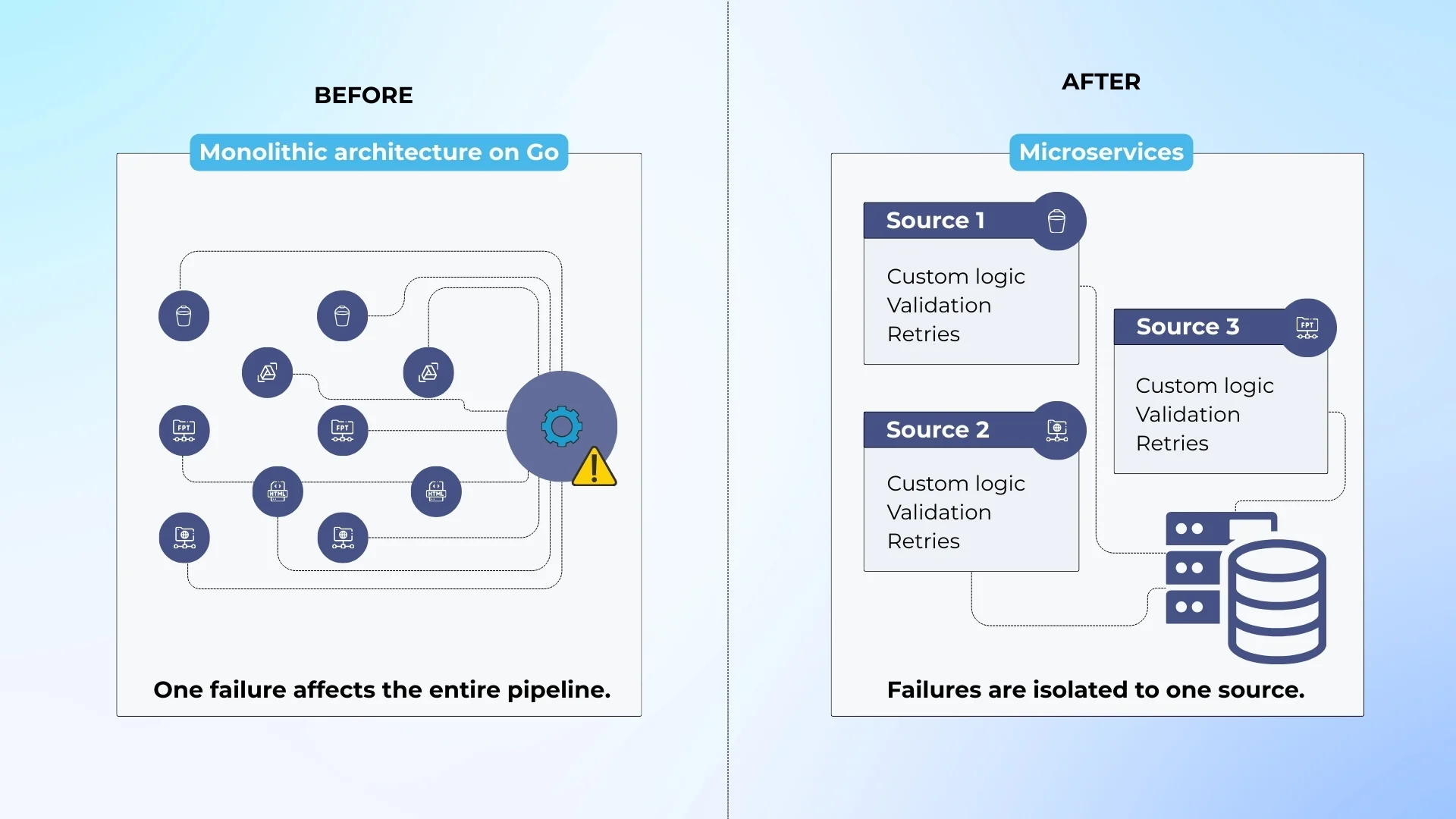

Legacy constraints

An older ingestion system existed but was unreliable and hard to extend. The client required the new system to run on their own servers.

Our Solution to Automating Data Ingestion

We rebuilt the legacy ingestion system as a dockerized, microservices-based system, so each source follows its own workflow without affecting the others. After file pre-processing, the system triggers an Airflow DAG for further data processing.
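The hand-off to Airflow can be pictured with a minimal sketch. It assumes the DAG is triggered through the Airflow 2.x stable REST API (`POST /api/v1/dags/{dag_id}/dagRuns`); the case study does not state which trigger mechanism is used. The host name, the DAG id `process_vendor_files`, and the `conf` keys are hypothetical. The sketch only builds the request and does not send it.

```python
import json
import urllib.request


def build_trigger_request(base_url: str, dag_id: str, conf: dict) -> urllib.request.Request:
    """Build (but do not send) a request that starts a new DAG run.

    Assumes the Airflow 2.x stable REST API; authentication is omitted
    because it depends on how the Airflow instance is configured.
    """
    url = f"{base_url.rstrip('/')}/api/v1/dags/{dag_id}/dagRuns"
    body = json.dumps({"conf": conf}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={"Content-Type": "application/json"},
    )


# Hypothetical host, DAG id, and payload, for illustration only.
req = build_trigger_request(
    "http://airflow.internal:8080",
    "process_vendor_files",
    {"source": "vendor_a", "files": ["a.csv"]},
)
print(req.get_method(), req.full_url)
```

Passing the source name and file list in `conf` lets the downstream DAG know exactly which pre-processed files to pick up. Sending the request would additionally require credentials that match the Airflow deployment.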

The system supports:

- Direct downloads from S3 and Google Drive

- Authenticated portals

- Dynamic link discovery

- Search-based file generation

- Files delivered by vendors into client storage

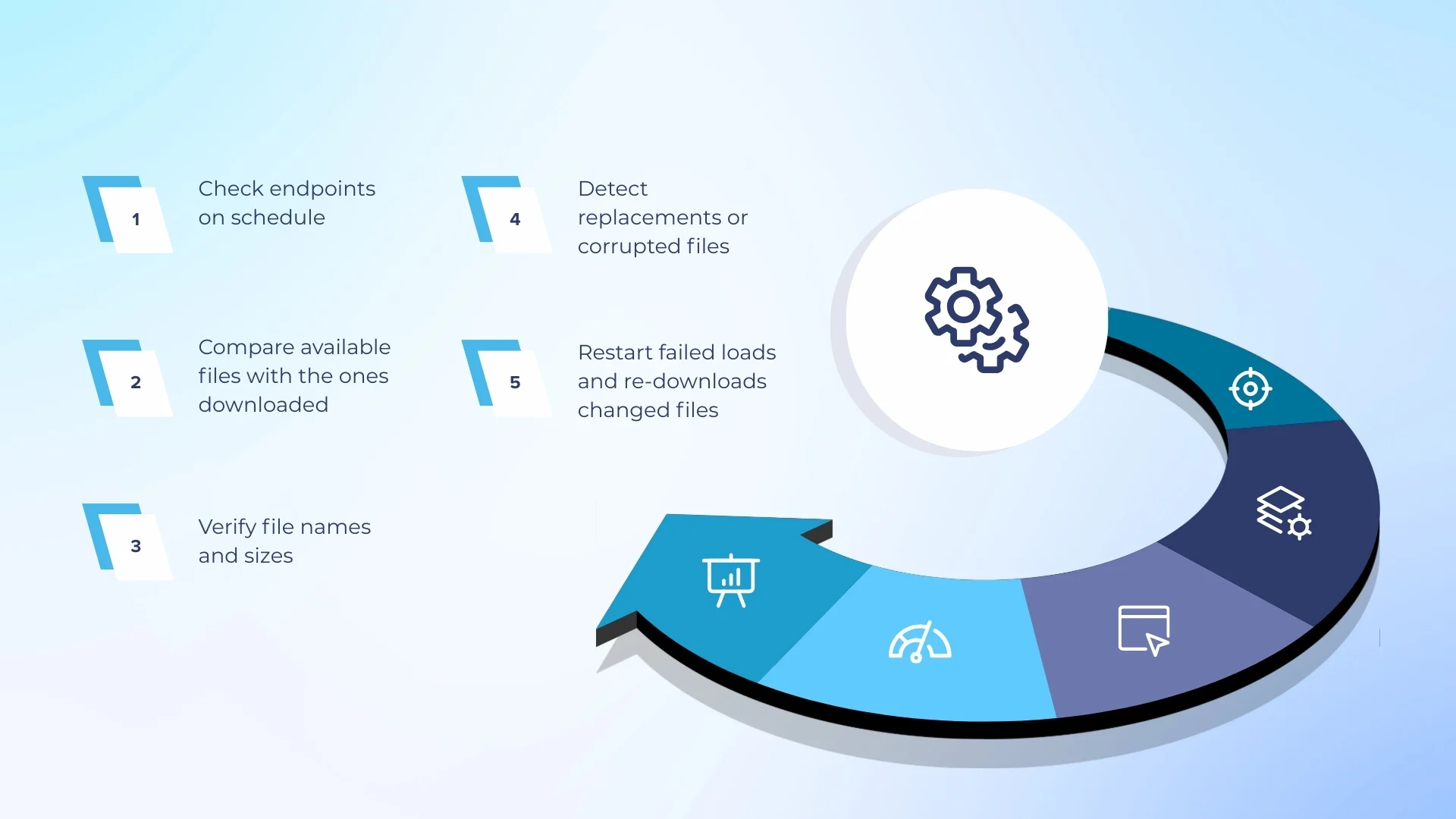

For every source, the system:

- Checks endpoints on a schedule

- Compares available files with what was already downloaded

- Verifies file names and sizes

- Detects replacements or corrupted files

- Re-downloads files when changes are detected
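The comparison step in the list above can be sketched as follows. This is a simplified illustration, not the production logic: it assumes the source exposes a listing with file names and sizes, and that the system keeps a record of the size of each file it has already downloaded. `RemoteFile` and `plan_downloads` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RemoteFile:
    name: str
    size: int  # size in bytes, as reported by the source


def plan_downloads(remote: list[RemoteFile], downloaded: dict[str, int]) -> list[RemoteFile]:
    """Return the remote files that are new or whose size has changed.

    `downloaded` maps file name -> size recorded at the last successful download.
    """
    to_fetch = []
    for f in remote:
        known_size = downloaded.get(f.name)
        # Unknown name: new file. Same name, different size: replaced or corrupted.
        if known_size is None or known_size != f.size:
            to_fetch.append(f)
    return to_fetch


listing = [RemoteFile("a.zip", 100), RemoteFile("b.zip", 250), RemoteFile("c.zip", 75)]
state = {"a.zip": 100, "b.zip": 200}
print([f.name for f in plan_downloads(listing, state)])  # ['b.zip', 'c.zip']
```

Here `b.zip` is selected because it was replaced under the same name with a different size, and `c.zip` because it has not been seen before.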

File sizes range from kilobytes to hundreds of megabytes. Some update cycles include hundreds of files.

Failures are expected and handled by design.

- Each job allows a limited number of failed file downloads

- Partial failures do not fail the entire job

- Missing files are retried automatically in later runs

- Only unrecoverable cases are marked as failed jobs
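The failure-handling rules above can be illustrated with a minimal sketch. It assumes a per-job failure budget (`max_failures`) and treats exceeding that budget as the unrecoverable case; the actual thresholds and criteria are not given in this case study. `run_job` and `flaky` are hypothetical names.

```python
from typing import Callable


def run_job(files: list[str], download: Callable[[str], None], max_failures: int) -> dict:
    """Download each file, tolerating up to `max_failures` failed files."""
    done, failed = [], []
    for name in files:
        try:
            download(name)
            done.append(name)
        except Exception:  # broad on purpose: any download error counts against the budget
            failed.append(name)
            if len(failed) > max_failures:
                # Assumption: exceeding the budget is what marks the job as failed.
                return {"status": "failed", "done": done, "retry_later": failed}
    # Files in `retry_later` are picked up again by a later scheduled run.
    status = "partial" if failed else "ok"
    return {"status": status, "done": done, "retry_later": failed}


def flaky(name: str) -> None:
    """Simulated downloader: files ending in .bad always fail."""
    if name.endswith(".bad"):
        raise IOError("simulated download failure")


result = run_job(["a.zip", "b.bad", "c.zip"], flaky, max_failures=1)
print(result["status"], result["retry_later"])  # partial ['b.bad']
```

A single failed file leaves the job in a partial state instead of failing it, so the files that did download are not discarded.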

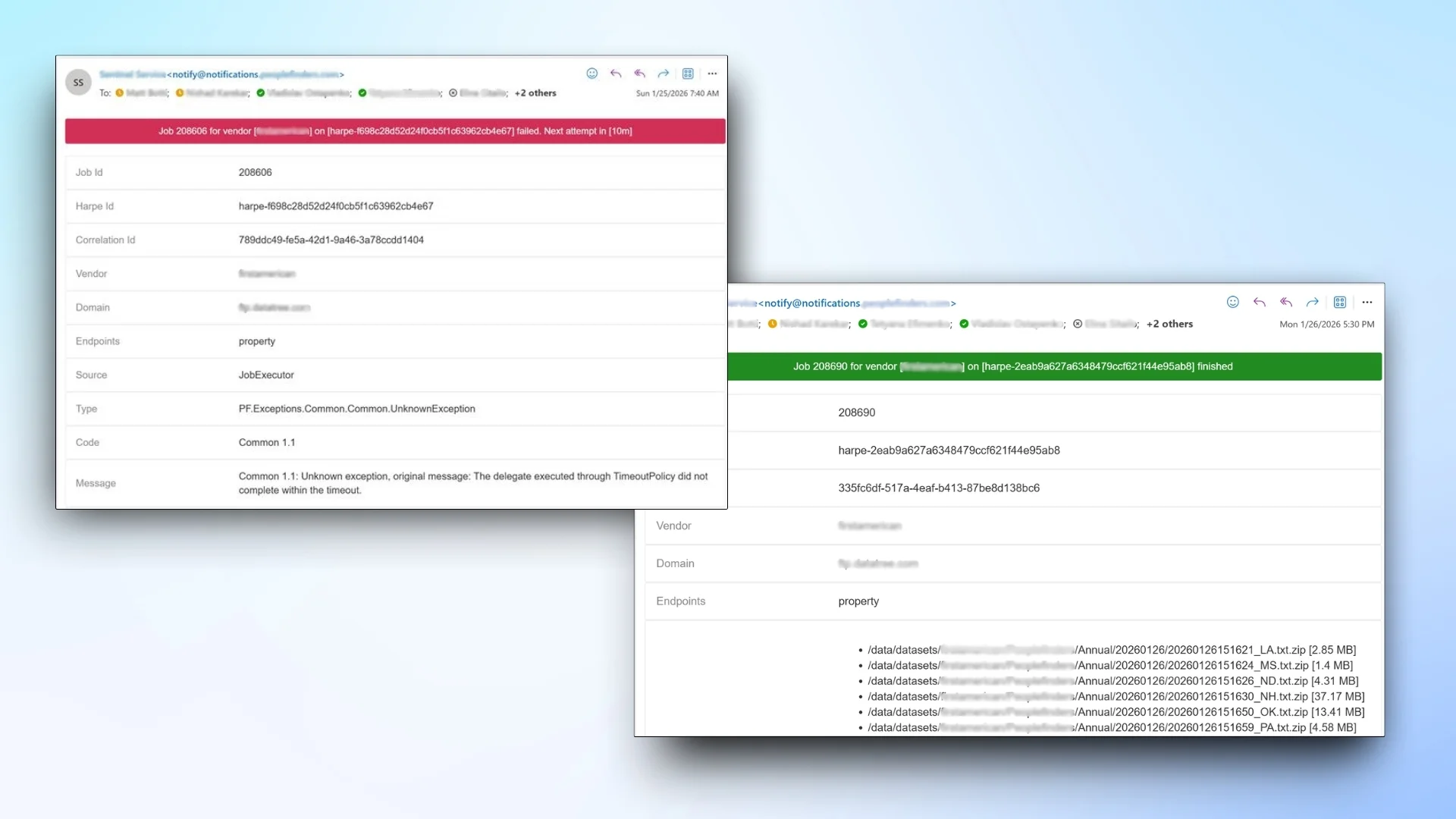

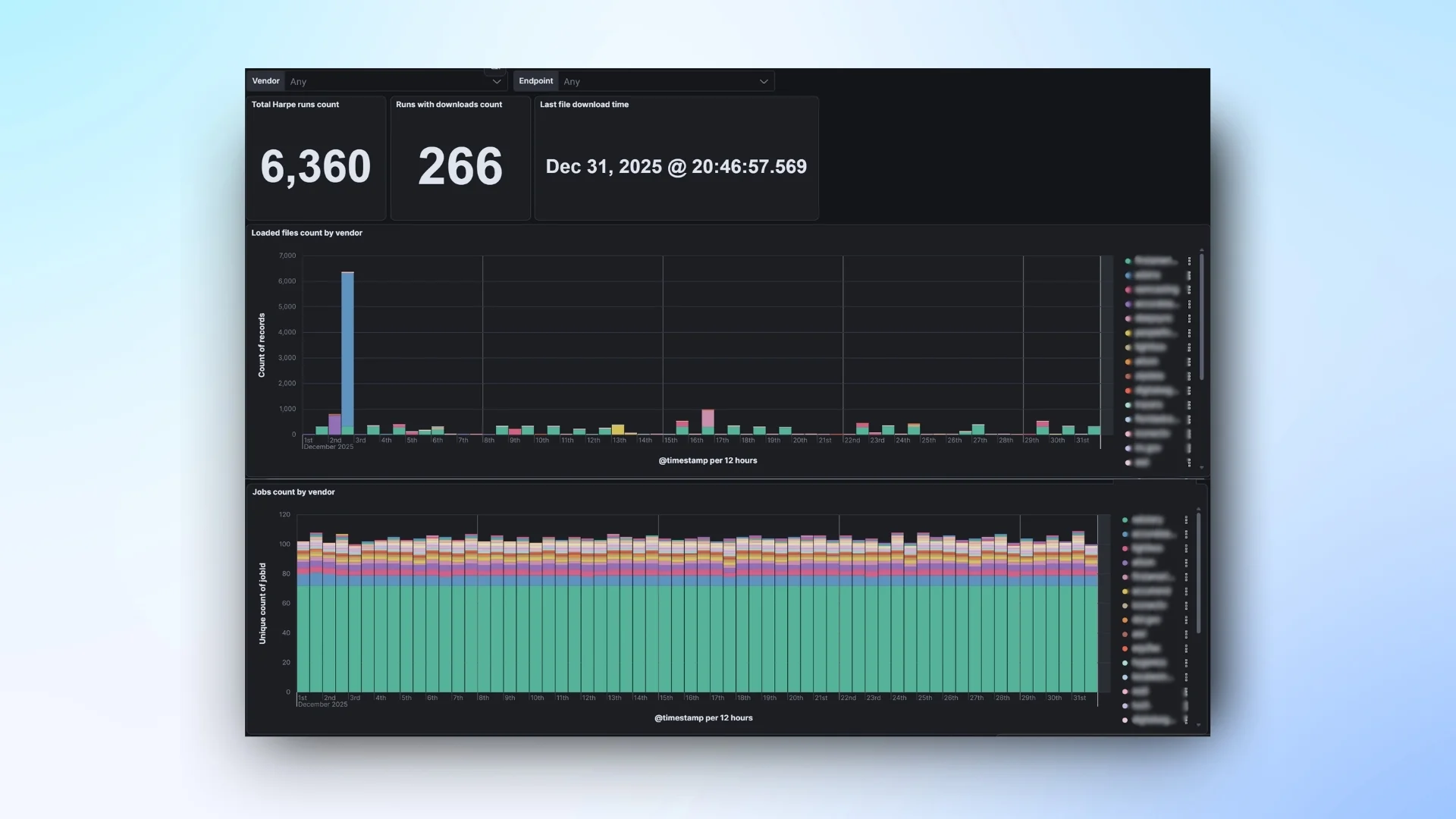

Through the dashboard, our client can track pipeline activity, including job execution, file downloads, and file pre-processing.

Alerts are triggered when:

- Expected data does not arrive

- Jobs fail or time out

- Storage limits are approaching
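The three alert conditions above could be evaluated along these lines. This is a sketch under assumptions: the allowed gap between deliveries, the status strings, and the 90% storage threshold are illustrative values, not figures from the project, and `collect_alerts` is a hypothetical name.

```python
from datetime import datetime, timedelta


def collect_alerts(
    now: datetime,
    last_arrival: datetime,
    max_gap: timedelta,
    job_status: str,
    disk_used: int,
    disk_total: int,
    disk_threshold: float = 0.9,  # assumed threshold, for illustration
) -> list[str]:
    """Return a message for each alert condition that currently holds."""
    alerts = []
    if now - last_arrival > max_gap:
        alerts.append("expected data did not arrive")
    if job_status in ("failed", "timed_out"):
        alerts.append(f"job {job_status}")
    if disk_used / disk_total >= disk_threshold:
        alerts.append("storage limit approaching")
    return alerts


print(
    collect_alerts(
        now=datetime(2024, 1, 10),
        last_arrival=datetime(2024, 1, 1),
        max_gap=timedelta(days=7),
        job_status="ok",
        disk_used=95,
        disk_total=100,
    )
)  # ['expected data did not arrive', 'storage limit approaching']
```

Keeping each condition as an independent check means one run can raise several alerts at once, for example late data together with low disk space.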

Key Results of the Data Ingestion Project

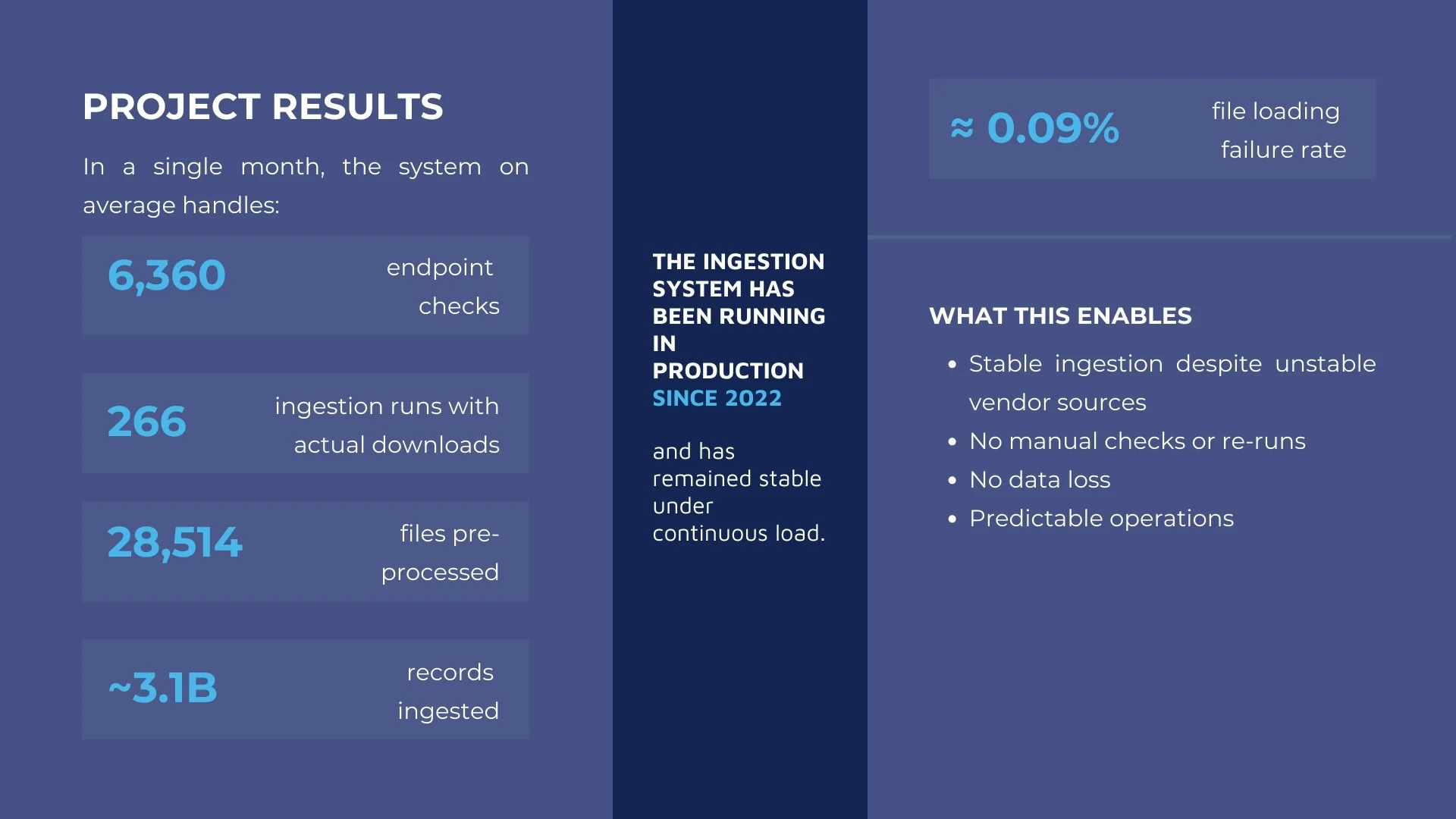

The system has been running in production since 2022 and operates continuously with minimal manual intervention.

The pipeline handles high file volumes and large record counts while maintaining data integrity through continuous verification and re-downloads when files change or are replaced.

- 6,409 ingestion jobs executed in 12 months

- 6 fully failed jobs out of 6,409 (≈ 0.09% failure rate)

- 6,360 endpoint checks in one month

- 266 runs with new data downloads per month

- 6,596 files unzipped and 28,514 files processed per month

- ~3.1 billion records ingested in one month