Client Goal and Expectations

The client is a U.S.-based data aggregation company with a consumer-facing product. They wanted to expand their service line with a new search feature based on an additional category of publicly available data. This data was not part of their existing pipeline and required a new collection and processing approach.

Their requirements:

- Monthly, production-ready data feed from 60+ websites

- Fixed CSV schema to avoid downstream pipeline changes

- Continuous monitoring of the data loading flow

- Ongoing optimization of the flow for better data quality

Why This Was Hard

Source diversity

Data came from 60+ public websites. Each source used its own structure, naming rules, and access logic. There was no shared standard to rely on.

Dynamic websites

Websites changed without notice. Some updates broke loaders outright. Others shifted fields, so loads completed and looked valid while producing incorrect data.

Fragmented records

The same person could appear multiple times within one state in different formats. Simple aggregation created duplicates and inconsistencies.

Schema stability

The client’s pipelines depended on fixed CSV structures. Even minor schema changes could disrupt automated ingestion and search logic.

The Solution for This Project

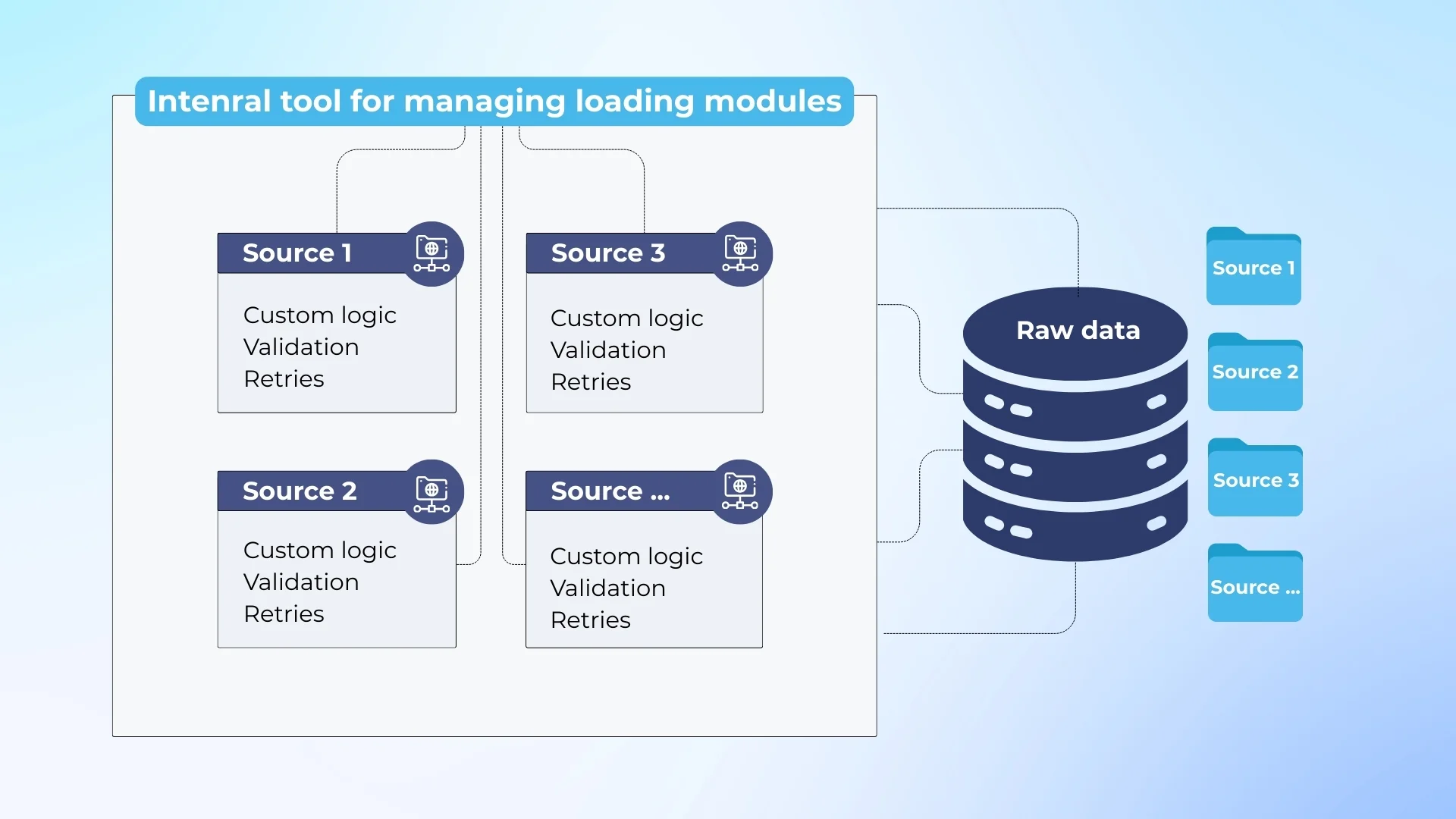

We built a modular ingestion system where each public source is handled independently. This isolates failures, allows targeted fixes, and prevents changes in one source from affecting the rest of the pipeline.
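A minimal sketch of this isolation, assuming each source module exposes a loader function that returns a list of records. The names `SourceResult` and `run_sources` are illustrative and not the production tooling:

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional

Record = Dict[str, str]


@dataclass
class SourceResult:
    source: str
    records: List[Record]
    error: Optional[str] = None  # set only when the source module failed


def run_sources(loaders: Dict[str, Callable[[], List[Record]]]) -> List[SourceResult]:
    """Run every source loader independently; one failure never stops the rest."""
    results: List[SourceResult] = []
    for name, loader in loaders.items():
        try:
            results.append(SourceResult(name, loader()))
        except Exception as exc:  # isolate the failure to this source
            results.append(SourceResult(name, [], error=f"{type(exc).__name__}: {exc}"))
    return results
```

A failed source shows up as a result with `error` set, so it can be fixed and rerun on its own while the other sources proceed.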

All modules are launched and managed through our internal tooling. It controls execution order, balances concurrent runs, and distributes load across infrastructure to keep monthly processing predictable and cost-controlled.
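The internal tooling itself is not shown here. As a rough stand-in, bounded concurrency can be sketched with Python's standard `ThreadPoolExecutor`, assuming each module is a callable and `max_workers` is the cap on simultaneous runs (both are assumptions for illustration):

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict


def run_bounded(jobs: Dict[str, Callable[[], int]], max_workers: int = 4) -> Dict[str, int]:
    """Run all jobs, never more than max_workers at the same time."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # Jobs are submitted in dictionary order; the pool queues the overflow.
        futures = {name: pool.submit(job) for name, job in jobs.items()}
        # result() blocks until the job finishes and re-raises its exception, if any.
        return {name: future.result() for name, future in futures.items()}
```

Capping the number of simultaneous runs is one simple way to keep load on the infrastructure, and therefore cost, within a predictable range.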

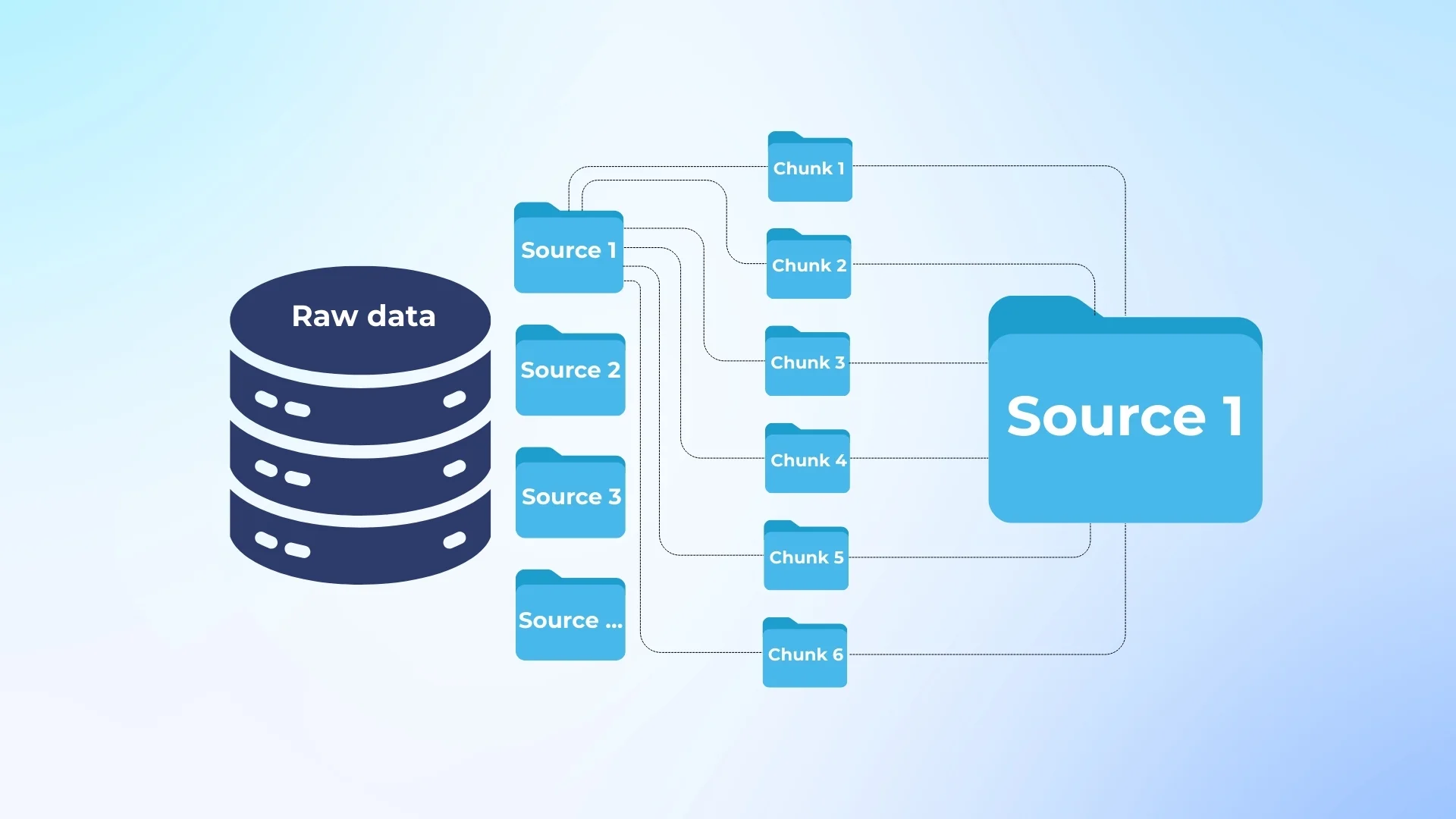

After collection, data is merged at the state level. Fragmented records are grouped, statistics are generated, and internal inconsistencies are identified before delivery.
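As a simplified illustration of grouping fragmented records, the sketch below buckets records by state and a case- and whitespace-insensitive name. The `name` and `state` fields and the matching rule are assumptions; real matching logic is typically richer than this:

```python
from collections import defaultdict
from typing import Dict, List, Tuple

Record = Dict[str, str]


def match_key(record: Record) -> Tuple[str, str]:
    """Build a grouping key: upper-cased state plus lower-cased, whitespace-collapsed name."""
    name = " ".join(record.get("name", "").lower().split())
    return (record.get("state", "").upper(), name)


def group_records(records: List[Record]) -> Dict[Tuple[str, str], List[Record]]:
    """Group records that share the same key so they can be consolidated later."""
    groups: Dict[Tuple[str, str], List[Record]] = defaultdict(list)
    for record in records:
        groups[match_key(record)].append(record)
    return dict(groups)
```

Group sizes double as simple statistics: a key with many records points to heavy fragmentation for that person within the state.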

We normalize text, split compound fields, align formats, and prepare records for consolidation without changing the delivery schema.
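Two of these steps can be sketched briefly: text normalization and splitting a compound field. The example assumes a hypothetical full-name field that arrives either as "Last, First" or "First Last"; the function names and output fields are illustrative only:

```python
import re
import unicodedata
from typing import Dict


def normalize_text(value: str) -> str:
    """Apply Unicode NFKC normalization, collapse whitespace runs, and trim."""
    value = unicodedata.normalize("NFKC", value)
    return re.sub(r"\s+", " ", value).strip()


def split_full_name(full_name: str) -> Dict[str, str]:
    """Split a compound name field into first and last name parts."""
    cleaned = normalize_text(full_name)
    if "," in cleaned:
        # "Last, First" form
        last, _, first = cleaned.partition(",")
    else:
        # "First Last" form: everything before the final space is the first name
        first, _, last = cleaned.rpartition(" ")
    return {"first_name": first.strip(), "last_name": last.strip()}
```

Both forms resolve to the same pair of fields, so downstream consolidation sees one format regardless of how the source published the name.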

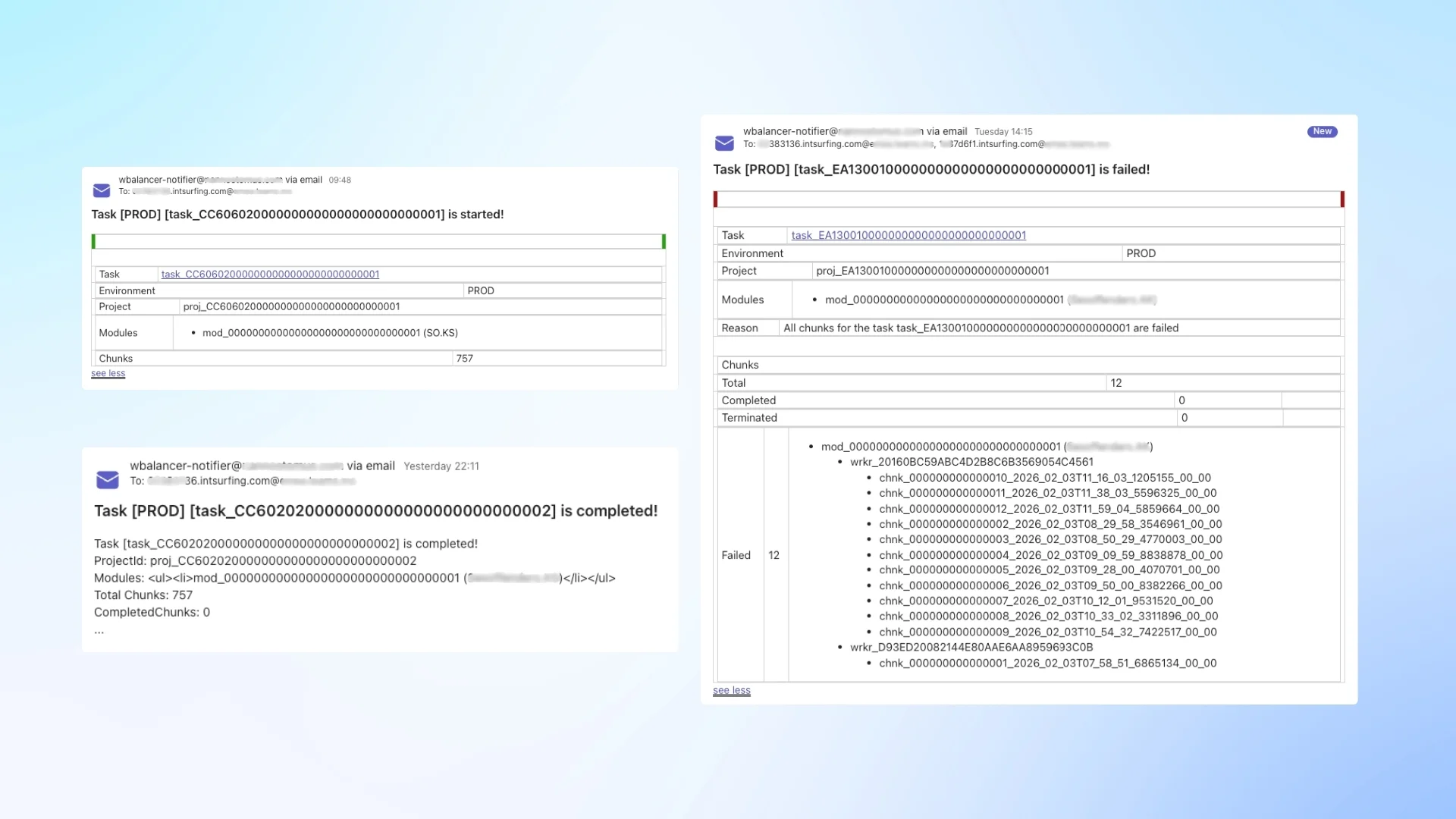

Every run is tracked at the chunk and module level. We compare expected versus actual output per source and generate reports that surface failures, partial loads, and data shifts.
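One simple form of an expected-versus-actual check is a record-count comparison per source. The sketch below assumes expected counts come from previous runs and uses a hypothetical 20% tolerance; the status labels are illustrative:

```python
from typing import Dict, List


def compare_counts(
    expected: Dict[str, int], actual: Dict[str, int], tolerance: float = 0.2
) -> List[Dict[str, object]]:
    """Classify each source by how its actual record count compares to the expected one."""
    report: List[Dict[str, object]] = []
    for source, exp in sorted(expected.items()):
        act = actual.get(source, 0)
        if act == 0:
            status = "failed"      # nothing was loaded
        elif act < exp * (1 - tolerance):
            status = "partial"     # noticeably fewer records than expected
        elif act > exp * (1 + tolerance):
            status = "suspicious"  # unexpected growth, worth a manual look
        else:
            status = "ok"
        report.append({"source": source, "expected": exp, "actual": act, "status": status})
    return report
```

Counts alone do not catch shifted fields, so a check like this would sit alongside content-level validation rather than replace it.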

Each processing step produces detailed logs that link errors to a specific source, chunk, and execution stage. This shortens investigation time and allows targeted fixes.
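A sketch of how log lines can carry that context, using the standard `logging.LoggerAdapter`. The log format and the `make_run_logger` helper are assumptions made for illustration:

```python
import io
import logging


def make_run_logger(stream, source: str, chunk: int, stage: str) -> logging.LoggerAdapter:
    """Return a logger that stamps every message with source, chunk, and stage."""
    handler = logging.StreamHandler(stream)
    handler.setFormatter(
        logging.Formatter(
            "%(levelname)s source=%(source)s chunk=%(chunk)s stage=%(stage)s %(message)s"
        )
    )
    logger = logging.getLogger(f"ingest.{source}.{chunk}.{stage}")
    logger.handlers = [handler]  # replace handlers so repeated calls do not duplicate output
    logger.setLevel(logging.INFO)
    logger.propagate = False
    # The adapter injects the context fields into every record it emits.
    return logging.LoggerAdapter(logger, {"source": source, "chunk": chunk, "stage": stage})


# Usage: the emitted line identifies exactly where the error occurred.
buffer = io.StringIO()
log = make_run_logger(buffer, "county_site_17", 3, "parse")
log.error("missing field %s", "dob")
```

With the context embedded in each line, filtering logs by source or stage becomes a plain text search.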

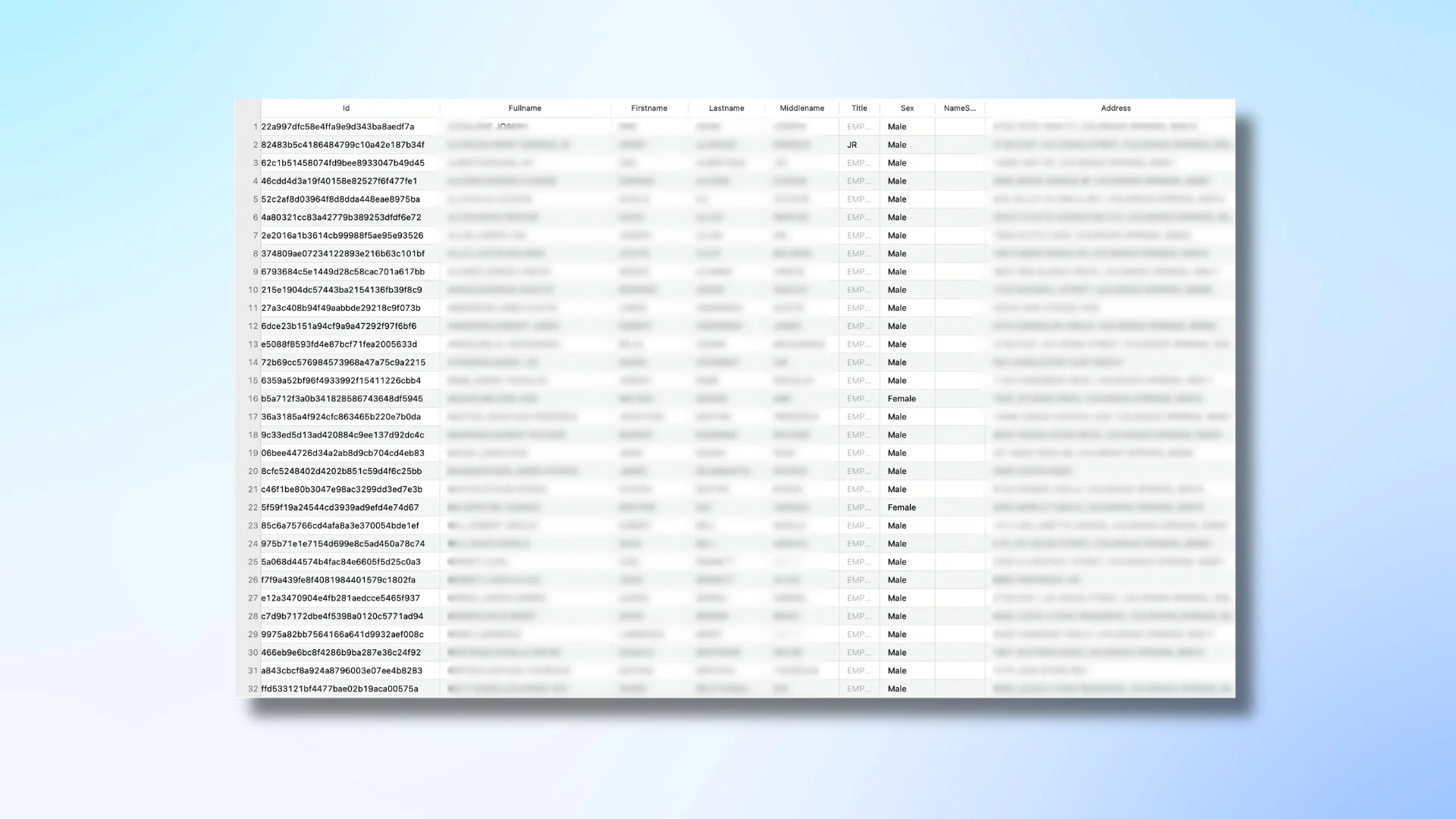

Final datasets are delivered as CSV files with a fixed schema. Structure, field order, and formats remain consistent month over month, allowing the client to ingest each delivery and serve search results without pipeline changes.
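A fixed schema can be enforced at write time. The sketch below uses the standard `csv.DictWriter` with a hypothetical four-column schema; the actual delivery columns are not listed in this case study:

```python
import csv
import io
from typing import Dict, Iterable, TextIO

# Hypothetical schema for illustration; column order is part of the contract.
SCHEMA = ["record_id", "first_name", "last_name", "state"]


def write_fixed_csv(rows: Iterable[Dict[str, str]], out: TextIO) -> None:
    """Write rows in SCHEMA order; unknown fields raise, missing fields become empty."""
    writer = csv.DictWriter(
        out,
        fieldnames=SCHEMA,
        restval="",             # fill missing columns with an empty string
        extrasaction="raise",   # reject any field outside the schema
        lineterminator="\n",
    )
    writer.writeheader()
    for row in rows:
        writer.writerow(row)


# Usage: key order in the input does not affect column order in the output.
out = io.StringIO()
write_fixed_csv(
    [{"state": "TX", "record_id": "1", "first_name": "John", "last_name": "Smith"}], out
)
```

Rejecting unexpected fields turns an accidental schema change into an immediate error on the producer side instead of a silent break in the client's ingestion.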

The system has been continuously improved since launch. We optimize execution time, reduce infrastructure usage, and refine processing steps.

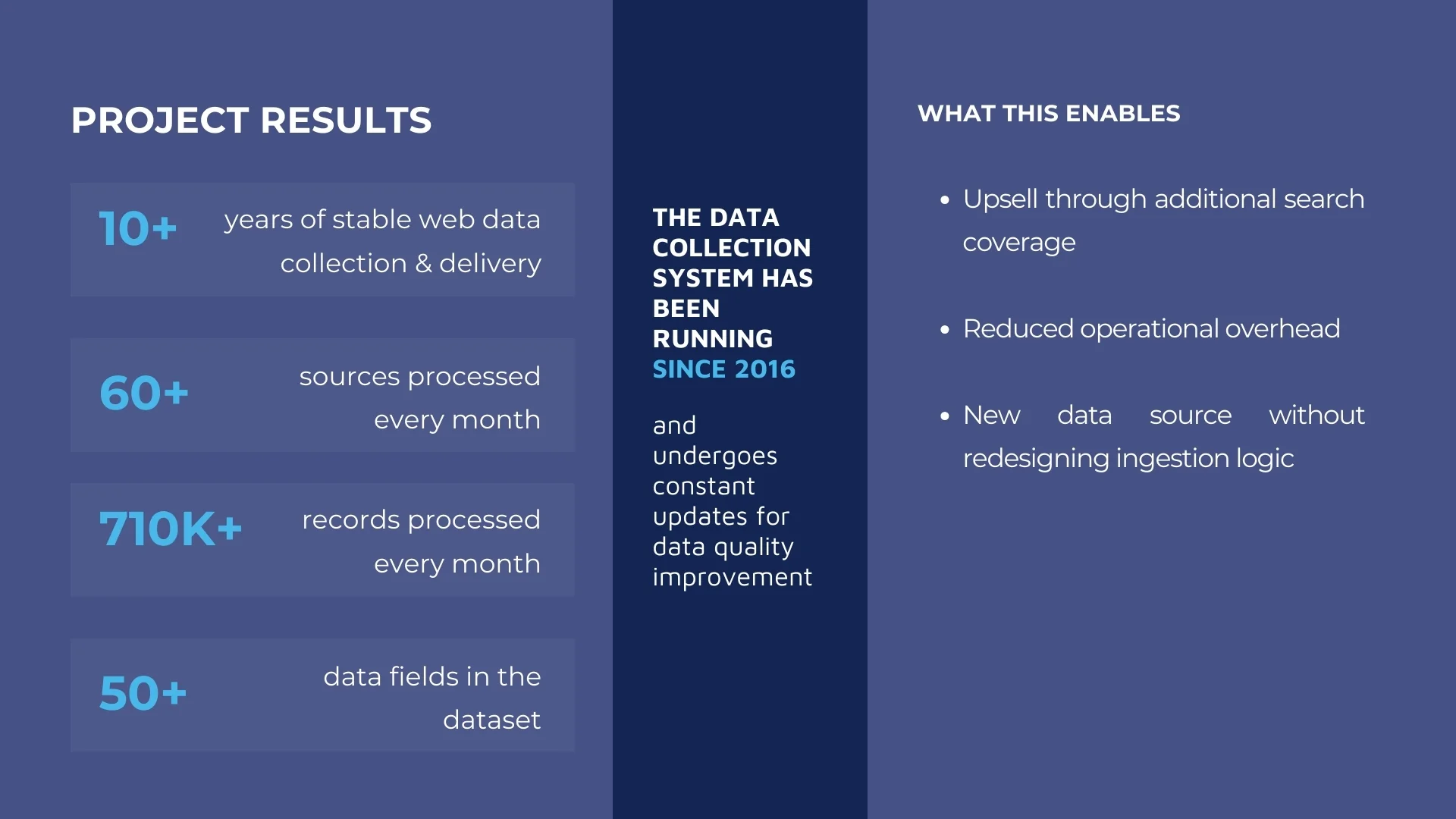

Results of the Project

The new dataset became the foundation for an additional search feature in the client’s consumer product. Equally important, the data flow proved stable in production.

Monthly updates arrived in a fixed structure, and ingestion ran without constant intervention.

Business outcomes:

- Enabled upsell through additional search coverage

- Expanded product capabilities without redesigning ingestion logic

- Reduced operational overhead thanks to schema-stable monthly delivery