In 2021, California courts removed date-of-birth information from online search portals to address privacy concerns. For background screening companies, that single change broke long-standing identity matching workflows overnight.

It forced teams to redesign their pipelines, rethink matching logic, and prove compliance to clients who suddenly couldn’t reconcile records.

Data sources shift, regulations tighten, and expectations around accuracy never go away. The only way forward is to build systems that can adapt. The following sections explore how to get there.

Why Data Engineering in Background Screening Is Uniquely Hard

The domain comes with constraints that make the data engineering challenges far more complex than in many other industries.

1. Fragmented and inconsistent public data

In the U.S., there are over 3,000 county courts, each with its own systems, formats, and access rules for criminal records. Some jurisdictions expose APIs, others publish weekly bulk files, while many only provide online portals or scanned docket PDFs.

Court feeds frequently go down or change format, which means engineering teams must detect failures quickly to avoid returning false “no records” results.

Globally, the situation is even more varied.

- European jurisdictions restrict identifiers and require explicit candidate consent under GDPR.

- In Asia-Pacific, identifiers differ (national ID vs. passport), and data residency laws often require regionalized pipelines.

This means designing systems that handle heterogeneous schemas, missing fields, and shifting legal constraints as core requirements.

2. High stakes for accuracy and compliance

Unlike other data domains where “mostly correct” might be acceptable, screening firms operate under the U.S. Fair Credit Reporting Act (FCRA), which requires reasonable procedures to assure “maximum possible accuracy.” A false positive, such as linking the wrong John Smith to a felony, can cost a candidate their livelihood and expose the employer to litigation. Conversely, missing a serious conviction creates liability for negligent hiring.

This priority shows up in survey data.

HireRight’s 2025 Global Benchmark Report found that accuracy ranked higher than both cost and speed when employers evaluated screening providers. That translates to a focus on lineage, auditability, and precision matching over raw throughput.
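As a rough illustration of what precision matching can mean in practice, the sketch below refuses to auto-match on name alone. The `Identity` fields, the choice of corroborating features, and the two-feature threshold are all assumptions made for this example, not a rule taken from FCRA or from any particular provider.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Identity:
    """Hypothetical identity features; real platforms use many more."""
    full_name: str
    dob: Optional[str] = None            # ISO date, often missing at the source
    ssn_last4: Optional[str] = None
    zip_codes: frozenset = frozenset()   # ZIP codes from address history


def normalize(name: str) -> str:
    # Lowercase and collapse whitespace so "john  smith" equals "John Smith".
    return " ".join(name.lower().split())


def match_decision(candidate: Identity, record: Identity) -> str:
    """Return 'match', 'review', or 'no_match' (assumed three-way outcome)."""
    if normalize(candidate.full_name) != normalize(record.full_name):
        return "no_match"

    # A conflicting identifier rules the record out entirely.
    if candidate.dob and record.dob and candidate.dob != record.dob:
        return "no_match"
    if (candidate.ssn_last4 and record.ssn_last4
            and candidate.ssn_last4 != record.ssn_last4):
        return "no_match"

    # Count identifiers present on both sides (they agree, or we returned above).
    corroborating = 0
    if candidate.dob and record.dob:
        corroborating += 1
    if candidate.ssn_last4 and record.ssn_last4:
        corroborating += 1
    if candidate.zip_codes & record.zip_codes:
        corroborating += 1

    # Assumed threshold: two agreeing features to auto-match, else human review.
    return "match" if corroborating >= 2 else "review"
```

A name-only hit comes back as `review` rather than `match`, which trades throughput for fewer false positives.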

3. Volatile and bursty workloads

Hiring is highly cyclical. In retail, hospitality, and logistics, background check requests spike by 50–100% during Q4 and back-to-school seasons. Employers must staff up fast, and the screening infrastructure must handle these surges.

Additionally, the market’s shift toward continuous monitoring makes workloads even less predictable.

This means architectures cannot remain static or monolithic.

4. Sensitivity and regulatory complexity

Background checks involve some of the most sensitive categories of PII: Social Security numbers, driver records, addresses, and medical results for certain roles.

Providers must comply with FCRA (U.S.), GDPR (EU), CCPA (California), plus state-level restrictions. For instance, California’s 2021 rule that removed date-of-birth visibility from online court searches forced identity-matching engines to shift toward alternative features, such as case numbers and address histories.

Beyond regulatory compliance, systems must be private by design: encrypting PII at rest and in transit, tokenizing identifiers, segregating production access, and keeping full audit trails.

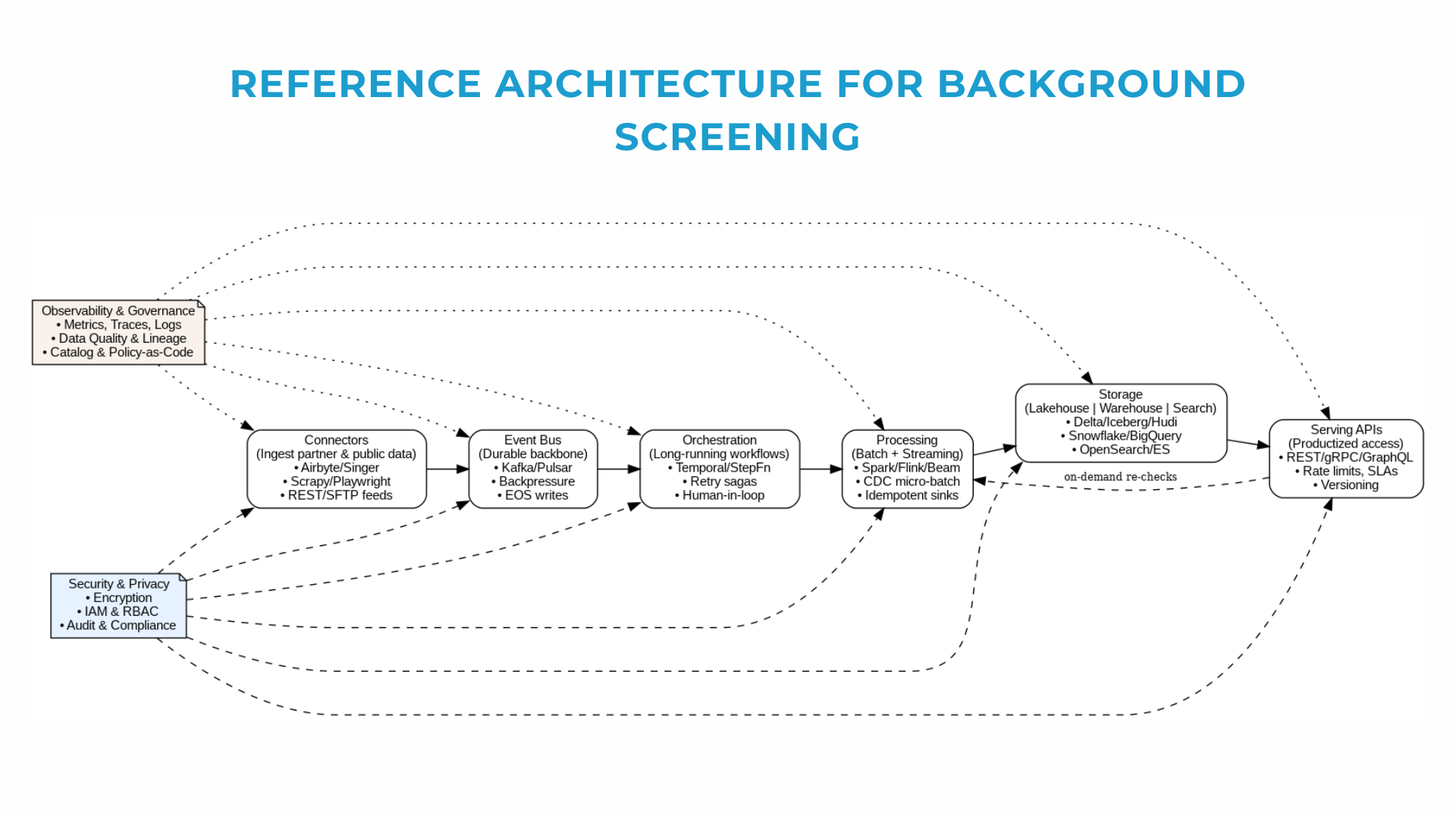

Reference Architecture at a Glance

Modern background screening platforms are data-driven. You ingest unstructured public records and partner feeds, orchestrate long-running workflows, process both streams and batches, and serve results with strict SLAs. All while proving lineage and protecting PII.

Core principles behind the design:

- Idempotence. Every job or record can run more than once without changing the result.

- Exactly-once semantics. Each subject is effectively processed once: retries may happen under the hood, but their effects are applied only once.

- Lineage everywhere. You should always be able to trace where a field came from and what transformations it went through.

- Human-in-the-loop edges. Automation runs 95% of the flow, but workflows must pause when a case needs human review.
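The first two principles are often implemented together with an idempotency key. The following is a minimal sketch, assuming requests are plain dictionaries and using an in-memory dict as a stand-in for a durable store; `IdempotentProcessor` and its methods are hypothetical names for illustration.

```python
import hashlib
import json


class IdempotentProcessor:
    """Run the handler at most once per logical request, keyed by content."""

    def __init__(self, handler):
        self._handler = handler
        self._results = {}  # assumption: a real system would use a durable store

    @staticmethod
    def key_for(request: dict) -> str:
        # Canonical JSON so that key order does not change the key.
        canonical = json.dumps(request, sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

    def process(self, request: dict):
        key = self.key_for(request)
        if key not in self._results:
            # First time we see this request: do the work and remember the result.
            self._results[key] = self._handler(request)
        # Retries and duplicates get the stored result without re-running.
        return self._results[key]
```

Calling `process` twice with the same request invokes the handler once and returns the same result both times, which is what makes retries safe.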

In the following sections, we’ll unpack each layer of this reference architecture.

Ingestion: Connectors, Schemas, and Change Resilience

Every background screening system lives or dies by its ingestion layer. If data doesn’t come in reliably, nothing downstream (from record matching to reporting) can be trusted. The challenge is that sources are fragmented, inconsistent, and constantly changing.

The source landscape

Screening platforms typically deal with three kinds of feeds.

- Public records: courts, sex offender registries, licensing boards.

- Partner APIs: motor vehicle records, sanctions lists, payroll or education verifications. They’re structured but come with strict quotas and rate limits.

- Client feeds: ATS or HRIS exports. Often just CSVs or JSON files, these arrive with inconsistent schemas or missing fields.

The diversity of inputs means ingestion can’t be a single connector or data pipeline—it has to be a strategy.

Engineering playbook

Teams build resilience by leaning on a handful of proven patterns. Connector SDKs allow modular, versioned code that can be reused across sources. Schema evolution (using Avro or JSON with versioning) helps absorb field changes without breaking pipelines. Incremental fetches minimize load, while backfill strategies ensure history can be reconstructed when needed.
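One way to absorb field changes with versioned JSON is a chain of small upgrade functions, each lifting a record by one schema version. The sketch below assumes a `schema_version` field and two invented schema changes; none of these names or versions come from a real feed.

```python
LATEST_VERSION = 3


def _v1_to_v2(record: dict) -> dict:
    # Hypothetical change: a single "name" field was split into two fields.
    out = dict(record)
    first, _, last = out.pop("name", "").partition(" ")
    out["first_name"], out["last_name"] = first, last
    out["schema_version"] = 2
    return out


def _v2_to_v3(record: dict) -> dict:
    # Hypothetical change: "case_number" was added as an optional field.
    out = dict(record)
    out.setdefault("case_number", None)
    out["schema_version"] = 3
    return out


UPGRADERS = {1: _v1_to_v2, 2: _v2_to_v3}


def upgrade(record: dict) -> dict:
    """Lift a record of any known version to LATEST_VERSION."""
    version = record.get("schema_version", 1)  # assume unversioned means v1
    while version < LATEST_VERSION:
        record = UPGRADERS[version](record)
        version = record["schema_version"]
    if version != LATEST_VERSION:
        raise ValueError(f"unsupported schema_version: {version}")
    return record
```

Downstream code then only ever handles the latest shape, and an old backfill file can be replayed through the same path.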

For public websites, crawling comes with its own rules of the road: respecting robots.txt, slowing down to avoid bans, and detecting DOM changes early.
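These crawling rules can be sketched with the standard library alone. The example below assumes a hypothetical user agent name and a default delay of five seconds; the structural fingerprint (tag names plus `id` attributes) is one simple heuristic for spotting DOM changes, not the only option.

```python
import hashlib
from html.parser import HTMLParser
from typing import Optional
from urllib.robotparser import RobotFileParser

USER_AGENT = "screening-crawler"  # hypothetical user agent name


def build_robots(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt content that was fetched earlier."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def fetch_delay(parser: RobotFileParser, url: str,
                default_delay: float = 5.0) -> Optional[float]:
    """Seconds to wait before fetching url, or None if fetching is disallowed."""
    if not parser.can_fetch(USER_AGENT, url):
        return None
    delay = parser.crawl_delay(USER_AGENT)
    return float(delay) if delay is not None else default_delay


class _TagCollector(HTMLParser):
    def __init__(self):
        super().__init__()
        self.tags = []

    def handle_starttag(self, tag, attrs):
        # Record structure only (tag and id), ignoring the page's text content.
        self.tags.append(f"{tag}#{dict(attrs).get('id', '')}")


def dom_fingerprint(html: str) -> str:
    """Hash of the page structure; a new value suggests the layout changed."""
    collector = _TagCollector()
    collector.feed(html)
    return hashlib.sha256("/".join(collector.tags).encode("utf-8")).hexdigest()
```

Comparing today's fingerprint with the last known one gives an early signal that a parser may be about to break, before it starts returning empty results.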

Building resilience into ingestion

Even with good patterns, real-world feeds fail. APIs change response formats. Courts update their websites overnight. Client CSVs arrive empty or with columns swapped. That’s why ingestion needs to be designed for failure, too.

- Change detection systems flag schema drift before it propagates.

- Retries with exponential backoff handle transient outages.

- Dead-letter queues keep bad records from blocking the flow.

- Snapshots of HTML or PDFs provide audit trails, which are critical when regulators or clients ask how a record entered the system.

- Source health SLOs make sure silent failures don’t linger for weeks.
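Two of these patterns, retries with exponential backoff and dead-letter queues, fit in a few lines. This is a minimal sketch: `TransientError`, the delay parameters, and the plain list used as a dead-letter queue are assumptions for illustration.

```python
import random
import time


class TransientError(Exception):
    """Hypothetical marker for failures worth retrying (timeouts, 503s)."""


def fetch_with_backoff(fetch, max_attempts=5, base_delay=0.5,
                       max_delay=30.0, sleep=time.sleep):
    """Call fetch(), retrying transient failures with jittered backoff."""
    for attempt in range(1, max_attempts + 1):
        try:
            return fetch()
        except TransientError:
            if attempt == max_attempts:
                raise  # give up and let the caller alert on the source
            # Cap doubles each attempt: 0.5s, 1s, 2s, ... up to max_delay.
            cap = min(max_delay, base_delay * 2 ** (attempt - 1))
            # Full jitter: sleep a random time up to the cap to avoid bursts.
            sleep(random.uniform(0, cap))


def ingest(raw_records, parse, dead_letters):
    """Parse what we can; park bad records instead of blocking the batch."""
    parsed = []
    for raw in raw_records:
        try:
            parsed.append(parse(raw))
        except ValueError as exc:
            dead_letters.append({"raw": raw, "error": str(exc)})
    return parsed
```

The `sleep` parameter is injectable so the retry logic can be tested without waiting, and the dead-letter entries keep the raw input so a fixed parser can replay them later.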

With data ingestion stable, the next challenge is coordinating what happens afterward: retries, compensations, escalations, and human reviews. We cover that in the next section.

Orchestration: Long-Running, Human-in-the-Loop Processes

A candidate’s data might trigger searches across different courts, an API call to a motor vehicle database, a request for employment history, and then pause until a human adjudicator decides whether a record is reportable. Orchestration is the layer that holds this all together.

Unlike simple ETL jobs, background screening workflows are long-running, stateful, and sometimes unpredictable. A county feed may respond in seconds, while an education verification can take days. APIs fail and need retries. Some steps can be automated, others need manual review. Without orchestration, these processes devolve into brittle scripts and ad hoc fixes.

Engineering teams typically choose between two orchestration styles:

- DAG schedulers (Apache Airflow). Ideal for data-centric jobs: nightly scrapes, schema validations, batch updates. They’re good at managing dependencies between jobs and running at scale, but less suited for long-lived processes.

- Workflow engines (Temporal or AWS Step Functions). These fit better when workflows can run for hours or days, and when you need robust failure handling. They support retries, timeouts, and compensating actions. Temporal, for instance, allows workflows to “pause” while waiting for a human decision, then resume exactly where they left off.

The right choice often isn’t either/or: Airflow may run your daily ingestion pipelines, while Temporal coordinates per-candidate checks that can stretch across systems and timeframes.
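To make the pause-and-resume idea concrete, here is a plain-Python state machine for a single candidate check. It deliberately does not use the Temporal or Step Functions APIs; the states, class, and method names are hypothetical, and a workflow engine would additionally persist this state and handle timers and retries.

```python
from dataclasses import dataclass, field
from enum import Enum


class State(str, Enum):
    SEARCHING = "searching"
    AWAITING_REVIEW = "awaiting_review"
    COMPLETE = "complete"


@dataclass
class CandidateCheck:
    candidate_id: str
    state: State = State.SEARCHING
    hits: list = field(default_factory=list)        # case IDs found by searches
    reportable: list = field(default_factory=list)  # case IDs cleared to report

    def record_search_results(self, hits):
        if self.state is not State.SEARCHING:
            raise RuntimeError(f"cannot record results in state {self.state.value}")
        self.hits = list(hits)
        # No hits means nothing to adjudicate; otherwise pause for a human.
        self.state = State.AWAITING_REVIEW if self.hits else State.COMPLETE

    def submit_review(self, decisions: dict):
        """Resume after a human decides, per case ID, whether it is reportable."""
        if self.state is not State.AWAITING_REVIEW:
            raise RuntimeError(f"cannot review in state {self.state.value}")
        missing = [h for h in self.hits if h not in decisions]
        if missing:
            raise ValueError(f"no decision for: {missing}")
        self.reportable = [h for h in self.hits if decisions[h]]
        self.state = State.COMPLETE
```

Between `record_search_results` and `submit_review` the check can sit idle for days; the explicit state is what lets it resume exactly where it left off.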

Processing: Batch, Streaming, and Hybrid

In background screening, how do you process data? There isn’t a single answer. Some use cases demand near real-time responsiveness. Others are better suited for scheduled jobs. The reality is that most platforms end up running a mix of both.

Why Batch Still Works

Many public sources provide data only in bulk drops. A state court may publish a weekly file of new convictions, or an education provider might send daily CSVs. These need to be processed in batches: parsed, normalized, and integrated with existing records. Batch jobs also make sense for historical rebuilds, for example reprocessing an entire registry after a schema change. The trade-off is latency: results arrive hours or days later, not instantly.

Where Streaming Works Better

On the other end of the spectrum, employers increasingly expect fast answers. If a candidate is flagged on a watchlist or an employee gets a new criminal charge, the system should react within minutes. Streaming pipelines make this possible by processing events as soon as they’re available. They’re essential for continuous monitoring, where updates are pushed from sources or detected via change-data capture (CDC) rather than pulled in bulk.

Hybrid Is the New Normal

In practice, few screening systems run purely in batch or purely in streaming. Most combine the two:

- Streams for deltas and high-priority events.

- Batches for slower or bulk sources.

- Compaction jobs that reconcile both into a clean, consistent index.

This hybrid design balances latency, cost, and reliability. Streaming ensures freshness, while batch keeps costs under control and simplifies integration with legacy sources.

| Mode | Latency | Cost profile | Complexity | Best for… |
|---|---|---|---|---|
| Batch | Hours to days | Lower (bulk, scheduled runs) | Simpler scheduling | Bulk drops, historical rebuilds, legacy feeds |
| Streaming | Seconds to minutes | Higher (constant compute, infra overhead) | More complex (stateful processing, checkpoints) | Continuous monitoring, alerts, fast SLA checks |
| Hybrid | Mix of near-real-time + scheduled | Balanced (streams for deltas, batch for bulk) | Highest (coordination required) | Modern platforms needing both freshness and scale |
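The compaction step in a hybrid design can be sketched as a last-write-wins merge. This example assumes every row carries a `record_id`, an ISO-8601 UTC `updated_at` string (which sorts correctly as text), and an optional `deleted` flag; those field names are illustrative.

```python
def compact(batch_rows, stream_events):
    """Merge a batch snapshot with stream deltas into one index keyed by ID."""
    index = {}
    # Stream events come second, so they win ties on updated_at.
    for row in list(batch_rows) + list(stream_events):
        key = row["record_id"]
        current = index.get(key)
        if current is None or row["updated_at"] >= current["updated_at"]:
            index[key] = row
    # Drop tombstones: records whose newest version marks them as deleted.
    return {k: v for k, v in index.items() if not v.get("deleted", False)}
```

Because the merge depends only on timestamps and not on arrival order within each input, rerunning it over the same data produces the same index.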

Storage & Access: Lakehouse, Warehouse, and Search

Processing produces value only if the results can be stored and retrieved in the right way. In background checks, this means designing for very different access patterns. Some queries demand large-scale analytics over millions of records. Others require sub-second answers to a single candidate check. No single system covers it all, which is why modern platforms combine lakehouse, warehouse, and search layers.

Each storage type serves a different purpose:

- Lakehouse ensures reliability and consistency.

- Warehouse enables analysis and oversight.

- Search delivers speed for client-facing queries.

Storage layers make data usable, but they aren’t the end of the story. The next step is delivering that data to customers and internal systems through APIs, which we cover next.

Serving APIs: Productized Access

APIs connect your system to client applications, ATS/HR integrations, and your own internal services. For most customers, the API is the product: if it’s unreliable, slow, or poorly documented, nothing else matters. APIs therefore need the same care as any customer-facing feature:

- Authentication and authorization ensure only the right clients access sensitive data.

- Rate limits and quotas protect the platform during traffic spikes.

- Versioning allows evolution without breaking existing integrations.

- SLAs and monitoring set clear expectations and provide evidence when things go wrong.
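Rate limiting is commonly implemented with a token bucket, which permits short bursts while capping the sustained rate. The sketch below is a single-process version with an injectable clock; the class name and parameters are assumptions, and a production limiter would typically keep per-client state in a shared store.

```python
import time


class TokenBucket:
    """Allow bursts up to `capacity`, refilling at `rate_per_sec` tokens/second."""

    def __init__(self, rate_per_sec: float, capacity: float, clock=time.monotonic):
        self.rate = rate_per_sec
        self.capacity = capacity
        self._clock = clock
        self._tokens = float(capacity)  # start full so initial bursts succeed
        self._last = clock()

    def allow(self, cost: float = 1.0) -> bool:
        now = self._clock()
        # Refill for the time elapsed since the last call, capped at capacity.
        self._tokens = min(self.capacity,
                           self._tokens + (now - self._last) * self.rate)
        self._last = now
        if self._tokens >= cost:
            self._tokens -= cost
            return True
        return False  # caller would typically respond with HTTP 429
```

Giving each client its own bucket, sized from its contract, means a seasonal spike from one customer cannot starve the others.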

A background screening platform’s value is measured at the API. No matter how well ingestion, orchestration, and storage are built, the customer only sees the response time, reliability, and clarity of that single call.

Treat APIs as products with lifecycles, guarantees, and user experience goals, not just endpoints bolted onto the system.

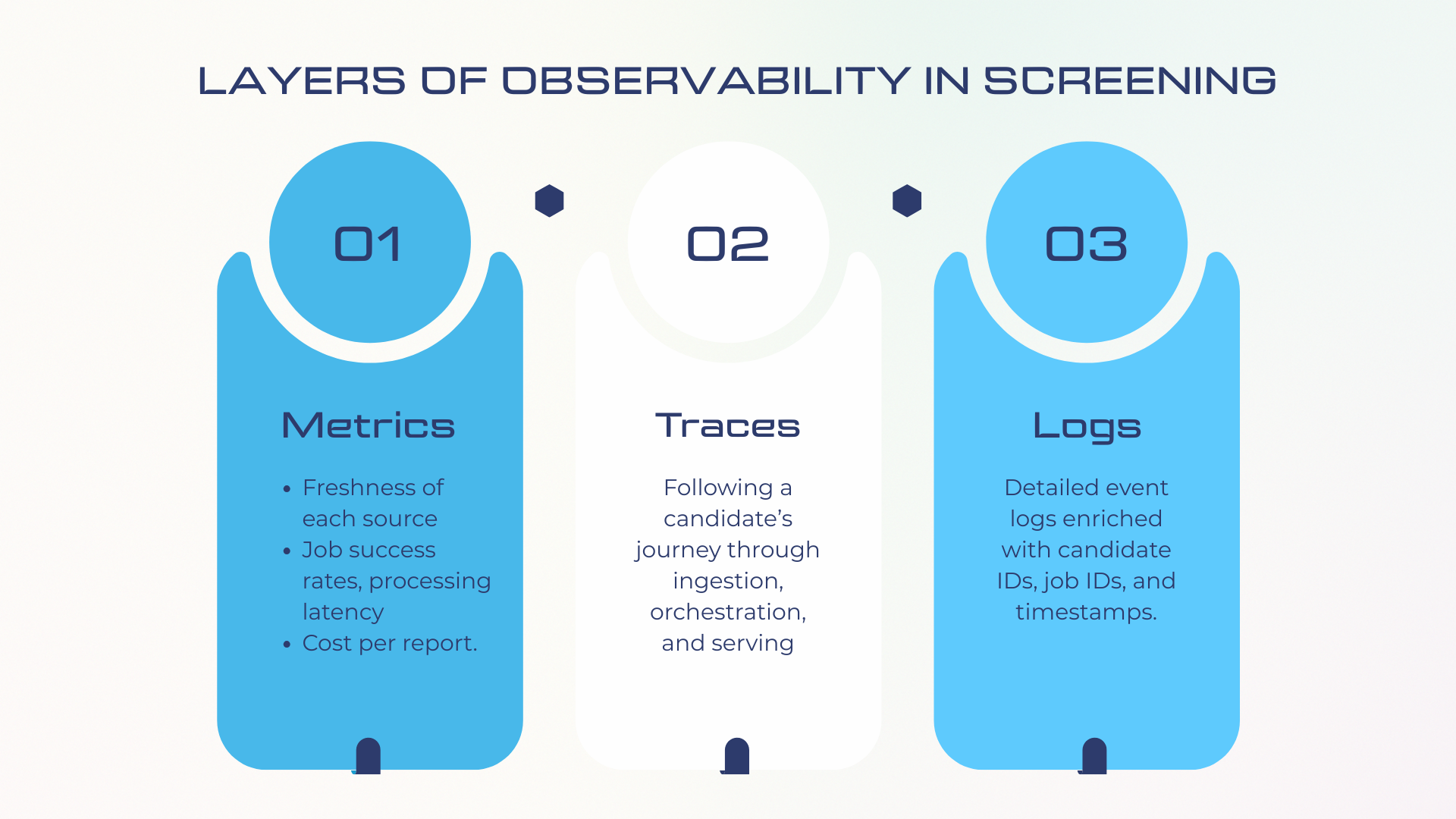

Observability & Governance: Reliability as a Feature

Gartner predicts that by 2026, 50% of enterprises with distributed data architectures will use data observability tools for pipeline visibility—up from under 20% in 2024.

Industry expectations around reliability and data trust are rising accordingly.

Clients, regulators, and internal teams need transparency: Is data fresh? Were all sources checked? Can we trace where a field came from? Observability and governance turn those questions into measurable answers.

Observability in a screening context covers three layers.

Observability alone isn’t enough. Screening platforms must also govern the data. That means:

- Data quality validation to ensure consistency, completeness, and accuracy before data enters downstream workflows.

- Lineage tracking to answer: Where did this record come from? What transformations did it go through? That line-of-sight is vital during audits or client challenges.

- Codified rules around retention, anonymization, and data access ensure that compliance is built into the pipeline.
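Validation and lineage tracking can travel with the record itself. The following is a minimal sketch, assuming a hypothetical set of required fields and an in-memory lineage list; real platforms usually emit lineage events to a dedicated catalog instead.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical required fields for a court record.
REQUIRED_FIELDS = ("case_number", "jurisdiction", "disposition")


@dataclass
class TracedRecord:
    data: dict
    source: str                                  # where the record came from
    lineage: list = field(default_factory=list)  # transformations applied so far

    def apply(self, step_name: str, transform) -> "TracedRecord":
        """Return a new record with the transform applied and logged."""
        updated = TracedRecord(transform(dict(self.data)), self.source,
                               list(self.lineage))
        updated.lineage.append({
            "step": step_name,
            "at": datetime.now(timezone.utc).isoformat(),
        })
        return updated


def validate(record: TracedRecord) -> list:
    """Return a list of problems; an empty list means the record may proceed."""
    return [f"missing {name}" for name in REQUIRED_FIELDS
            if not record.data.get(name)]
```

When a client challenges a report, `source` plus the ordered `lineage` entries answer where the record came from and what was done to it.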

With observability and governance baked into your platform, the final safeguarding layer remains—security and privacy. Screening involves some of the most sensitive data out there, and protecting it at every layer is the top priority.

Security & Privacy: Defense in Depth

Background screening platforms process some of the most sensitive data imaginable: Social Security numbers, addresses, dates of birth, driving records, employment histories. A single breach means lost trust, regulatory fines, and legal exposure. That’s why security and privacy are the foundation of the architecture.

FCRA (U.S.), GDPR (EU), and CCPA (California) set strict rules on how the data is used. But even beyond compliance, clients expect screening providers to demonstrate that data is protected end-to-end.

These are the core practices every platform needs:

- Encryption everywhere – data encrypted at rest and in transit with modern standards (AES-256, TLS 1.2+).

- Tokenization & masking – sensitive fields (SSN, DOB) are replaced with tokens or masked when full visibility isn’t required.

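- Secrets management – tools such as HashiCorp Vault or AWS Secrets Manager keep credentials out of codebases and logs, while a key management service such as AWS KMS protects encryption keys.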

- Least privilege access – role-based or attribute-based access control ensures staff and services only see what they must.

- Immutable audit logs – every access or change is recorded and stored securely, providing evidence in case of disputes or audits.
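Tokenization and masking can be illustrated with the standard library. Note the assumption: the sketch uses a keyed hash (HMAC-SHA256), which gives deterministic, non-reversible tokens suitable for joins and lookups. Vault-style tokenization that can be reversed by an authorized service is a different design. The function names are hypothetical.

```python
import hashlib
import hmac


def _digits(value: str) -> str:
    return "".join(ch for ch in value if ch.isdigit())


def tokenize_ssn(ssn: str, key: bytes) -> str:
    """Deterministic token: the same SSN and key always give the same token."""
    # The key must live in a secrets manager, never alongside the data.
    return hmac.new(key, _digits(ssn).encode("utf-8"), hashlib.sha256).hexdigest()


def mask_ssn(ssn: str) -> str:
    """Show only the last four digits, for screens that need a visual check."""
    digits = _digits(ssn)
    if len(digits) != 9:
        raise ValueError("expected a 9-digit SSN")
    return f"***-**-{digits[-4:]}"
```

Because the token is stable, two systems holding the same key can match records on it without either one storing the raw identifier.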

Good security controls are not enough without privacy practices embedded into the pipeline itself. That means:

- PII masking in logs – developers should never see raw SSNs when debugging.

- Anonymized test data – QA and staging environments shouldn’t use real candidate information.

- Retention and deletion rules – data purged after defined timeframes; user requests for deletion/export must flow through the system reliably.

- Consent management – explicit tracking of when and how candidate consent was obtained, tied to the specific checks performed.
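PII masking in logs, for instance, can be enforced centrally with a logging filter rather than left to each developer. This sketch assumes a simple SSN-shaped pattern; it will also redact other nine-digit numbers, and a real deployment would cover more identifier types.

```python
import logging
import re

# Matches 123-45-6789 and 123456789 (assumption: also catches other 9-digit runs).
SSN_PATTERN = re.compile(r"\b\d{3}-?\d{2}-?\d{4}\b")


class RedactSSNFilter(logging.Filter):
    """Replace SSN-shaped substrings before any handler sees the record."""

    def filter(self, record: logging.LogRecord) -> bool:
        # Format the message first so values passed as args are covered too.
        record.msg = SSN_PATTERN.sub("[REDACTED-SSN]", record.getMessage())
        record.args = None
        return True  # keep the record, now redacted
```

Attaching it once with `logger.addFilter(RedactSSNFilter())` means a call like `logger.info("lookup failed for %s", ssn)` reaches the log output with the value already replaced.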

In screening, you don’t get second chances on security. Building trust means making protection visible — encryption badges, audit trails, compliance certificates, and demonstrable privacy controls.

Conclusion

It’s easy to think of background screening as a speed game, but the winning platforms balance speed with control. If your pipelines can’t explain where data came from, how it was processed, and why a record appeared in a report, you’re carrying risk.

Speed matters, but confidence and transparency are what turn a data pipeline into a platform clients actually trust.