Let’s say someone on your team finds a public website with data that looks useful.

But before anyone commits engineering time, there are usually a few questions:

- Can this site realistically become a reliable data source?

- What does the data actually look like once it’s extracted?

- How difficult will ongoing collection be?

- And most importantly, is this worth allocating engineering effort right now?

At some point the discussion usually narrows down to:

Should this website become part of the production data collection pipeline?

Before making that decision, it often helps to validate the web data source first.

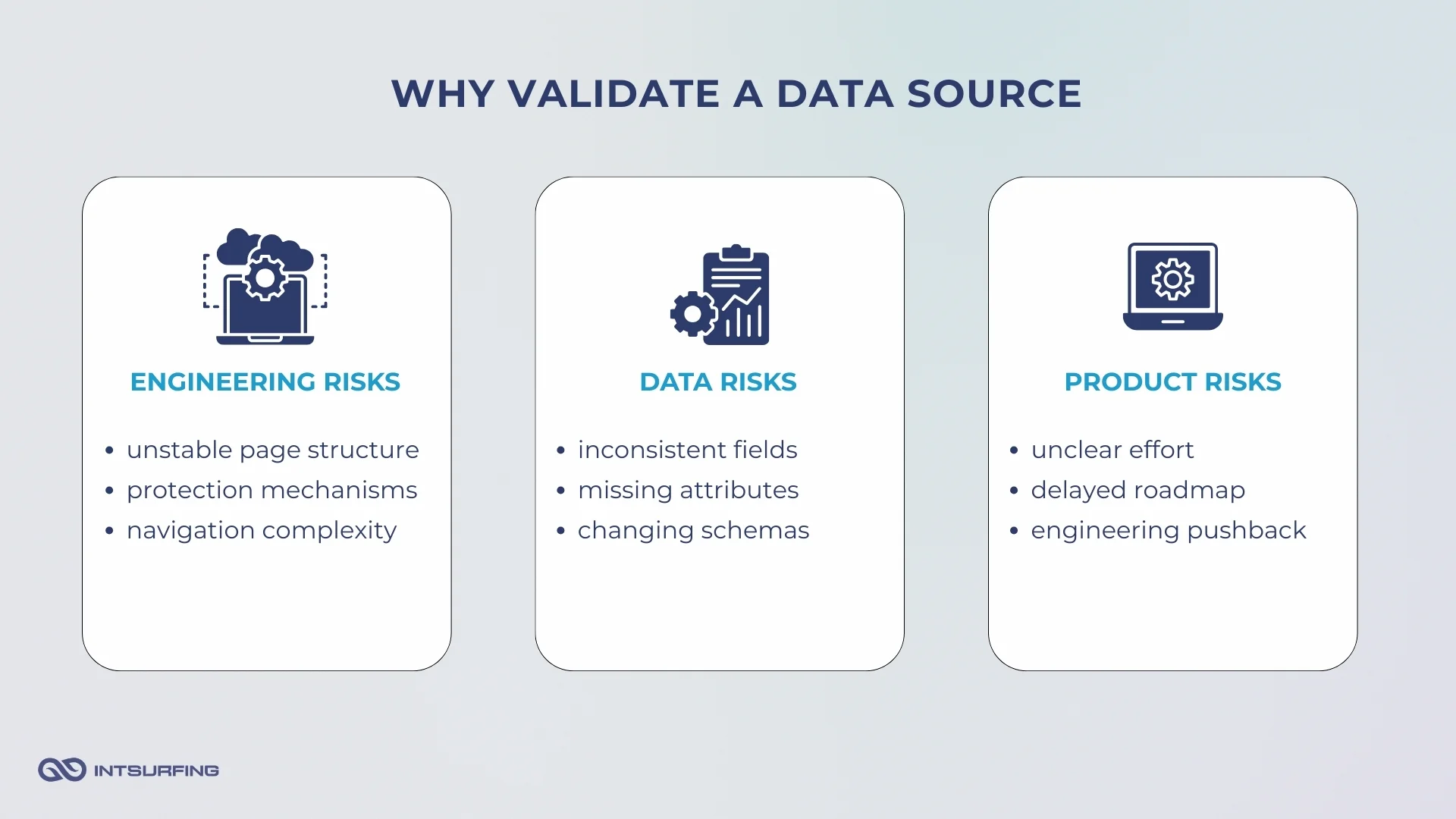

Why Validating a Data Source Early Matters

When a new website appears as a potential data source, the biggest unknown is the scale of work behind it.

For example, in one of our projects for a vehicle parts procurement platform, we tested automated data collection from a website that exposed records through an API with strict rate limits. The data scope contained 68,212 records. Once we worked out the allowed request rate and the number of requests needed to load all those records (more than 61M), it turned out that collecting the data with one VM would take 2,131 days. This completely changed the team’s priorities: they decided to focus on a different data source first.
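The arithmetic behind an estimate like this is simple enough to sketch. The snippet below is a minimal illustration, not the calculation used in the project: the function name `estimate_collection_days` and the limit of 20 requests per minute are assumptions made for the example, since the actual rate limit is not stated here.

```python
def estimate_collection_days(total_requests: int,
                             requests_per_minute: float,
                             workers: int = 1) -> float:
    """Days needed to send total_requests at a fixed rate limit.

    Assumes the limit applies per worker and is used fully, with no
    retries or downtime, so the result is an optimistic lower bound.
    """
    if total_requests < 0:
        raise ValueError("total_requests must be non-negative")
    if requests_per_minute <= 0 or workers < 1:
        raise ValueError("rate and worker count must be positive")
    requests_per_day = requests_per_minute * 60 * 24 * workers
    return total_requests / requests_per_day


# Hypothetical inputs: 61M requests, an assumed limit of 20 requests/minute.
days = estimate_collection_days(61_000_000, requests_per_minute=20)
print(f"Estimated collection time: {days:,.0f} days")
```

With these assumed inputs the estimate lands at roughly 2,100 days, the same order of magnitude as the figure above. Adding workers shortens the estimate only if the site enforces its limit per client rather than per account or API key.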

So, in most cases, the question isn’t whether the data can be collected. It’s how difficult it will be to collect and maintain this source over time.

What Teams Usually Do When Evaluating a New Data Source

In most companies, someone on the team — often an engineer or a data platform specialist — starts exploring the site. They inspect the page structure, identify where the fields are located, and follow the navigation logic between listing pages and detail records.

Then they start testing the website as a data source: they write a loading module to pull sample records.

Within a couple of days, this usually answers the first technical questions. But this internal approach comes with a cost.

Until someone completes this exploration, it is hard to estimate whether the source will require a few days of work or several weeks of engineering effort.

Even a small test takes a few days of engineering time — time taken from roadmap work. And early prototypes rarely reveal the full complexity. Issues with field consistency or navigation often appear only when the dataset grows beyond the first few hundred records.

Because of this, some teams start with a small external test of the source before deciding how to move forward.

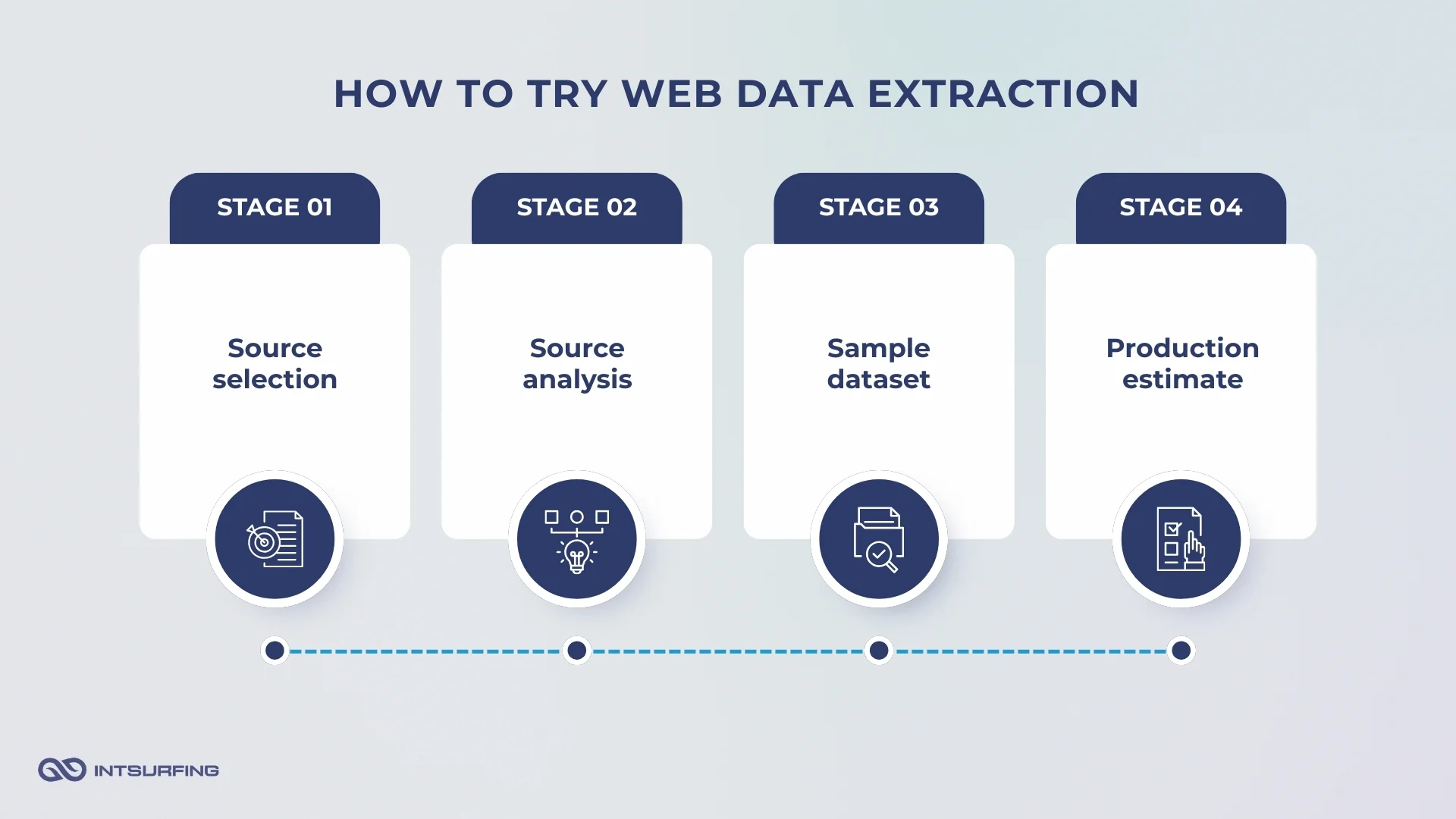

Try Web Data Collection on Your Source

Instead of assigning engineers to explore a new source, you can start with a short validation by Intsurfing.

Cost: $0

Timeline: 1–5 business days

Step 1 — Source selection

You send one website that contains the data you want to collect. This can be a directory, catalog, registry, or any public site with structured information.

Step 2 — Source analysis

Our engineers study the source before collecting anything. They review:

- page structure

- navigation logic (pagination, nested pages)

- available data fields

- technical constraints that may affect automated collection

Step 3 — Sample dataset

We build the data loading module and use it to collect a sample dataset. You receive:

- structured data in CSV or JSON

- real records from the website

- a clear view of available fields and data formats

Step 4 — Production estimate

Finally, we prepare a short assessment of what production collection would involve. This includes:

- notes about technical complexity

- estimated engineering effort

- expected timeline for production setup

- an approximate project budget if you decide to outsource ongoing data collection and maintenance to us

At this point, your team has enough information to decide whether this source should become part of the production data pipeline.

If you later decide to outsource the production data collection and maintenance, the module we developed for the test becomes the starting point for the full pipeline.

What You Learn from This Test of Web Data Collection

A short validation test answers the questions that usually block decisions around a new data source.

Within 1–5 business days, the team gets a clear picture of what working with this source would actually involve.

Engineering teams understand:

- whether the page structure is stable

- how complex navigation is (pagination, nested pages)

- what technical constraints may affect automated collection

- potential maintenance risks if the site changes

Data teams see:

- the actual dataset extracted from the site

- available fields and formats

- missing attributes or inconsistencies

- whether the data can feed existing ingestion pipelines

Product leaders learn:

- whether the source is realistic for the roadmap

- how long production setup may take

- whether the expected value justifies the effort
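For the data-team questions above (available fields, formats, missing attributes), a quick profile of the sample records is often enough to spot problems. The sketch below is one possible way to do it, assuming the sample was delivered as JSON and loaded into a list of dictionaries. The function name `profile_records` and the example fields (`sku`, `price`, `brand`) are hypothetical.

```python
from collections import Counter, defaultdict


def profile_records(records):
    """Return per-field fill rate and observed value types.

    A value counts as missing when the field is absent, None, or an
    empty string. Mixed types in one field hint at inconsistent formats.
    """
    total = len(records)
    filled = Counter()
    types = defaultdict(set)
    for record in records:
        for field, value in record.items():
            types[field]  # register the field even when the value is empty
            if value is None or value == "":
                continue
            filled[field] += 1
            types[field].add(type(value).__name__)
    return {
        field: {
            "fill_rate": filled[field] / total if total else 0.0,
            "types": sorted(types[field]),
        }
        for field in types
    }


# Hypothetical sample records, for illustration only.
sample = [
    {"sku": "A-100", "price": 19.99, "brand": "Acme"},
    {"sku": "A-101", "price": "24.50", "brand": ""},
    {"sku": "A-102", "price": None},
]
for field, stats in profile_records(sample).items():
    print(field, stats)
```

In this made-up sample, `price` appears both as a number and as a string, and `brand` is filled in only one of three records. These are the kinds of inconsistencies that tend to surface once real records are in hand.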

If You Decide to Outsource the Production Data Collection to Intsurfing

Let’s say the validation confirms the source is worth pursuing, and your team decides it makes more sense to outsource the production work to our developers.

Here is what happens next.

1. We sign the agreement

We sign a service agreement through DocuSign (or any tool your team prefers).

The signed document is then sent to the bank for standard verification. This usually takes 1–3 business days.

2. Payment setup

Once the bank confirms the agreement, transfers can start according to the terms.

We issue a standard invoice so your accounting team can process it easily.

3. Production data collection starts

While the paperwork and transfer are in progress, we continue the work.

The module built during the validation test becomes the foundation for the full data collection, so we don’t start from scratch.

4. Data delivery

When the dataset is ready, we prepare it for delivery and load it to the destination your team prefers, for example Amazon S3, other cloud storage, or an internal system.

5. Optional data quality work

If needed, we can also process the data further:

- entity resolution

- cleaning and normalization

- parsing and standardization

- validation checks

6. Maintenance

If the source requires continuous updates, we simply keep the pipeline running.

Billing can be arranged monthly, quarterly, or annually, depending on the project.

Starting Website Data Extraction with a Small Test

Starting web data collection with a small test helps when a new site appears as a potential data source but the team still lacks clarity about the real effort behind it.

Maybe the product team needs the data quickly, but engineering capacity is limited.

Maybe the data platform team wants to see the actual dataset before building ingestion pipelines.

Or maybe leadership simply needs a realistic estimate before allocating budget and roadmap time to collect data from websites.

A short validation removes much of that uncertainty. Your team sees real records, understands the structure of the data, and gets a realistic view of the work required.

If you are evaluating a new website as a data source, start with a quick validation.

Send one source to contact@intsurfing.com.

Receive a structured dataset and a production estimate within a few days.